Introduction

Artificial Intelligence (AI) is undoubtedly a vital tool for cybersecurity. It enables threat detection and critical vulnerability aspects within an organizational network. AI further helps to identify patterns showing a security breach. The main advantage of AI in the cybersecurity space is its innate ability to detect and respond to real-time threats. So, AI-enabled security systems facilitate network monitoring by identifying crucial endpoints and detecting devices for specific anomalies responsible for security compromise.

The primary concern with using OpenAI platforms is the potential for unauthorized access and data breaches. There might be a risk of information being hacked, resulting in severe cyberbullying, identity theft, and security breaches. An OpenAI platform, viz., ChatGPT has emerged as a popular chatbot. It is developed by OpenAI and performs text generation and language translation tasks. It also analyses voluminous data and enhances cyber threat intelligence. Organizations can have timely insights into cyber threats, enabling proactive actions prior to cyberattacks.

Effects of OpenAI in the Cybersecurity Domain

Cybersecurity is essential for organizations as it protects devices, data, and personal information from cyberattacks. It also enables organizations to avoid online scams, follow compliance, and protect their valuable reputations. Cybersecurity reduces cyber threats like malware attacks, software supply chain attacks, social engineering attacks, distributed denial-of-service (DDoS) attacks, and password attacks, offering timely recovery from cyberattacks.

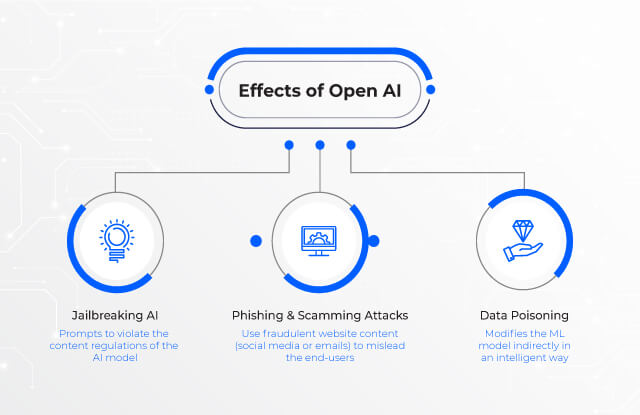

OpenAI in the cybersecurity domain reduces the time taken to accomplish time-intensive tasks. It not only scans the data but also identifies potential threats, thereby reducing false positives and filtering non-threatening processes. Although OpenAI enables experts to focus on critical security activities, it is still open to cyberbullying activities like jailbreaking chatbots, data poisoning, and phishing and scamming attacks.

Effects of Open AI.

| Jailbreaking AI | Data Poisoning | Phishing and Scamming Attacks |

|---|---|---|

| Prompts to violate the content regulations of the AI model | modifies the ML model indirectly in an intelligent way | use fraudulent website content (social media or emails) to mislead the end-users |

Jailbreaking AI

Jailbreaking refers to challenges related to OpenAI platforms like ChatGPT. It comprises prompts to violate the content regulations of the AI model, thereby misusing the content. The OpenAI platform enables the chatbots to envision the content based on sentence prediction, training data, and keyword occurrence.

OpenAI platforms are easier to use and faster to access. But the platforms are often associated with vulnerabilities and can be easily misused by cybercriminals. Cybercriminals can deploy prompt injections, prompting the language model to ignore directions and compromise cybersecurity. For example, cybercriminals can prompt the chatbot to act as another AI model and misuse it by ignoring the original AI instructions. OpenAI platforms are using a methodology known as adversarial training. This training enables OpenAI’s chatbots to search for ways to break ChatGPT.

Data Poisoning

Data poisoning is a type of adversarial attack involving the manipulation of training datasets by injecting poisoned (polluted) data. Unlike adversarial attacks that involve direct modification of the machine learning (ML) model, data poisoning modifies the ML model indirectly in an intelligent way. It involves data tampering by adding misleading data to the machine learning data and generating undesirable results. This is to control the behavior of the trained machine learning model and provide false output. Moreover, the algorithm learns from the poisoned data and draws incorrect conclusions. Recent research shows that cybercriminals have successfully used AI to attack user authentication and identity validation, including audio and video hacks.

Phishing and Scamming Attacks

Cybersecurity experts have experienced many cases wherein ChatGPT’s credentials from OpenAI were spoofed on various phishing websites, spreading malware or causing data theft incidents like stealing credit card information. Organizations have also observed that considering the Internet to be a trusted source for ChatGPT makes the chatbot vulnerable to cyberattacks.

A cyberattack, viz., indirect prompt injection, permits a third-party user to alter a website by purposely adding hidden text, thereby changing the AI’s behavior. Cybercriminals use fraudulent website content (say, social media or emails) to mislead the end-user with the help of hidden prompts. Once the user is lured, cybercriminals can easily manipulate the AI model and execute cybercrimes like data theft. Furthermore, scammers might use ChatGPT-related social engineering and perform identity theft. For example, scammers can trap victims by asking for confidential information, such as their email address and credit card information.

Ensuring AI-based Cybersecurity

The effect of OpenAI platforms on cybersecurity can be positive and/or negative. For instance, if an advanced AI platform such as ChatGPT enhances the cybersecurity of a network, it also makes the network vulnerable to cyber threats. However, it is vital to understand and acknowledge that the effects of OpenAI platforms can be misused for malicious purposes. There has to be an effective collaboration between AI-based research organizations and the cybersecurity sector. This collaboration enables effective stability between AI capability and cybersecurity risks.

Thus, ethical guidelines are essential for the use of AI in cybersecurity, along with precautionary steps to prevent the malicious use of AI-based solutions. Moreover, thorough research into developing AI-based systems will prove beneficial in detecting and preventing cyber threats.

Other Blogs

From Nuclear Centrifuges To Machine Shops: Securing IoT

IoT or ‘the internet of things’ has been around for a lot longer than the buzzword

Read More

Demystifying XDR

As the capabilities of threat actors have increased so have the tools which we utilize to detect and respond to their activities.

Read More

Cybersecurity In A Post Pandemic World

As many cyber security practitioners will tell you, the most imminent and dangerous threat to any network are the employees accessing it.

Read More